Machine Learning Security

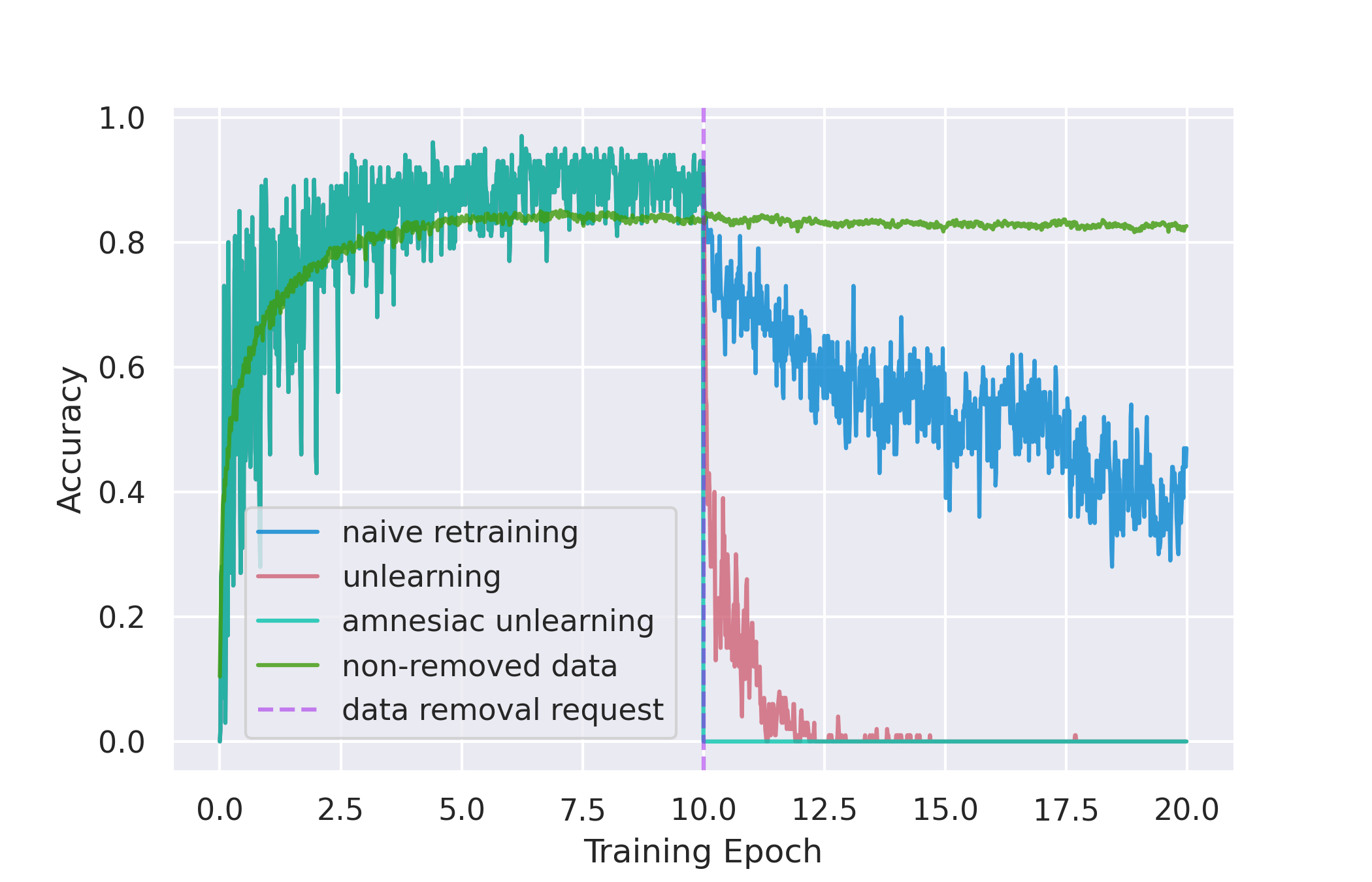

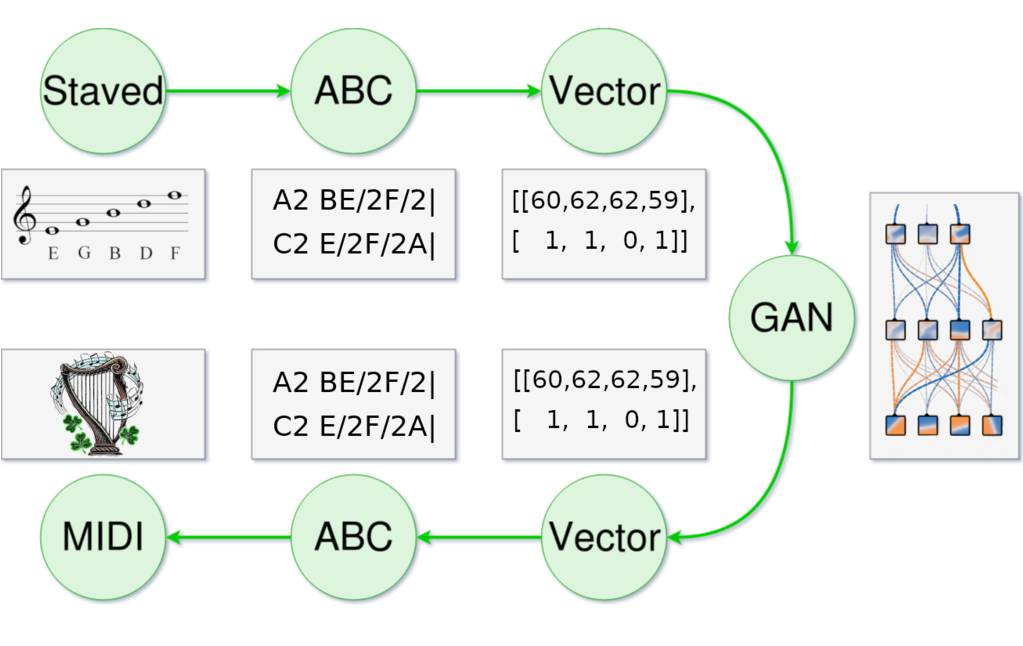

Machine learning models learn all sorts of things they weren't intended to learn. As a consequence, they also leak all sorts of information that wasn't meant to be leaked, from class information to private records to unintended decision boundaries. I'm intrigued by how we can use these unintentional properties to better understand how these models learn, how the properties can be exploited, and, most importantly, how we can protect against people trying to exploit them.